Understanding privacy risk with k-anonymity and l-diversity

Imagine you’re a data analyst at a global company who’s been asked to provide employee statistics for a survey on remote working and distributed teams. You’ve extracted the relevant employee data, but sharing it as-is could violate privacy laws. How can you anonymize the data while ensuring it’s still useful? In this article, you’ll learn about k-anonymity and l-diversity—two valuable techniques in privacy engineering to help you reduce the privacy risk in datasets.

First, let’s look at the data you’ve extracted from your internal HR system:

| full_name | country | tenure_years | department | |

|---|---|---|---|---|

| John Smith | jsmith@… | USA | 5 | Sales |

| Maria Garcia | mgarcia@… | USA | 3 | Marketing |

| Yuki Tanaka | ytanaka@… | Japan | 7 | Engineering |

| Hans Mueller | hmueller@… | Germany | 2 | Finance |

| Sarah Johnson | sjohnson@… | UK | 5 | HR |

| Pierre Dubois | pdubois@… | UK | 3 | Sales |

| Li Wei | lwei@… | China | 7 | Engineering |

| Anna Kowalski | akowalski@… | USA | 2 | Marketing |

| Eva Schmidt | eschmidt@… | Germany | 5 | Finance |

| Priya Patel | ppatel@… | UK | 3 | HR |

The data identifies individual employees by name and email, so sharing it with a third party may violate privacy laws.

A first attempt at anonymization

Fortunately, the survey partner doesn’t need this level of specificity. Let’s start by removing all fields that directly identify individual employees, such as full_name and email.

Next, we’ll attempt to further de-identify the individuals by aggregating individual rows into groups.

| country | tenure_years | departments | count |

|---|---|---|---|

| USA | 0-5 | Sales, Marketing | 3 |

| Japan | 6-10 | Engineering | 1 |

| Germany | 0-5 | Finance | 2 |

| UK | 0-5 | HR, Sales | 3 |

| China | 6-10 | Engineering | 1 |

There, we’ve removed the names and emails—but is new dataset truly anonymous? Assume that an attacker knows that Yuki Tanaka lives in Japan. With this dataset, they could infer that Yuki works in Engineering and roughly how long he’s been with the company. Even though a dataset doesn’t directly identify an individual, they may still be identifiable.

It’s clear that our first attempt isn’t enough to reasonably protect the privacy of the employees. Next, we’ll see how we can further reduce the privacy risk with k-anonymity.

K-anonymity

K-anonymity is a data anonymization technique ensuring that for each combination of quasi-identifying attributes (such as country and tenure), there are at least k rows that share those exact values.

The k is a number we choose. For example, if k=2, the data is considered to be 2-anonymous. A higher k value provides more privacy.

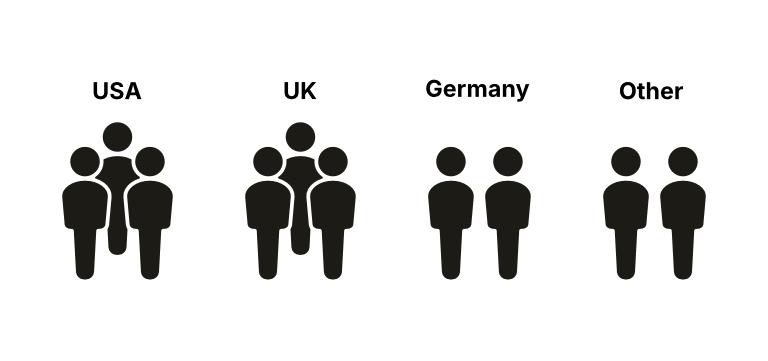

Example with k=2

By setting k=2, we’re saying that each combination of quasi-identifiers must appear at least twice in the dataset.

Here’s how our data looks after applying k=2 anonymity:

| country | tenure_years | department | count |

|---|---|---|---|

| USA | 0-5 | Sales, Marketing | 3 |

| UK | 0-5 | HR, Sales | 3 |

| Germany | 0-5 | Finance | 2 |

| Other | 6-10 | Engineering | 2 |

For k = 2, each group contains at least two employees.

Notice how Japan and China are now grouped as “Other”. Since both of them only have one employee, we had to combine them for all combinations to have a count of 2 or more.

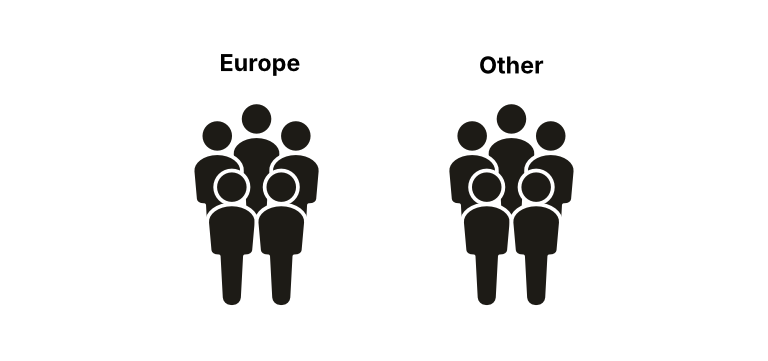

Example with k=5

If a higher k means improved privacy, why don’t we just set it to a really big number? Let’s see what happens when we increase k to 5—each combination of quasi-identifiers appearing at least five times:

| continent | tenure_years | department | count |

|---|---|---|---|

| Europe | 0-5 | HR, Sales, Finance | 5 |

| Other | 0-10 | Engineering, Sales, Marketing | 5 |

For k = 5, only two groups remain.

It’s now much harder to identify individual employees in the dataset. But as a result, we had to remove so much information that it lost its usefulness. Any employee outside of Europe is grouped under “Other”, and the range of tenure becomes so big that we may as well remove it altogether.

Lower k values increase specificity, while higher k values offer better privacy protection. The best value for k depends on your dataset and how sensitive your data is.

L-diversity

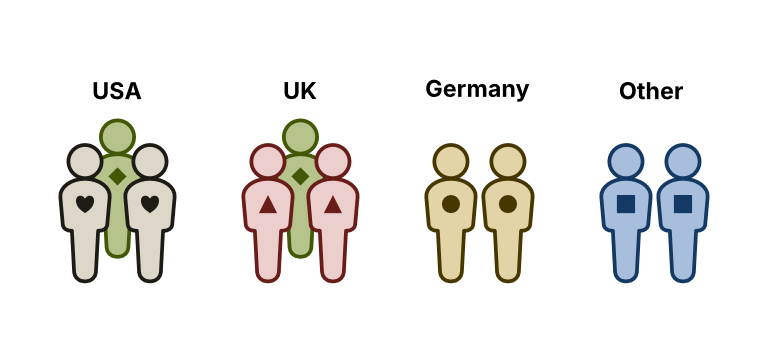

Let’s assume we choose k=2 so that all combinations occur at least twice. Unfortunately, it still fails to protect employees in Germany, Japan, and China.

Since all the employees in Asia work in Engineering, we’ve done little to protect Yuki’s privacy. You may also have realized that it doesn’t actually matter how many employees are in the Other group if all of them are in Engineering. The problem isn’t the size of the group, but the lack of diversity in the departments column.

This is where l-diversity comes in. L-diversity builds on k-anonymity to provide even more privacy by ensuring a given level of diversity in sensitive attributes within each group.

The Germany and Other groups aren’t diverse enough for l = 1.

L-diversity ensures that there’s sufficient diversity within each combination of quasi-identifiers. For example, l=5 means that within each group, there are at least 5 well-represented values for the sensitive attribute (in this case, department).

In our example, one way to achieve l=2 is to move all employees in Germany into the Other group. Note that, as a result, the dataset is now 3-anonymous.

| country | tenure_years | department | count |

|---|---|---|---|

| USA | 0-5 | Sales, Marketing | 3 |

| UK | 0-5 | HR, Sales | 3 |

| Other | 0-10 | Finance, Engineering | 4 |

k = 3 and l = 2

Now, each group has at least two different department values, satisfying l-diversity with l=2. Even if someone knew Yuki was based in Japan, it’s no longer trivial to deduce that he’s in Engineering. Unfortunately—just like for k=5—the tenure range (0-10) is likely too wide to be useful.

As you can see, setting the values or k and l involves balancing data utility against privacy protection. It also illustrates an important point: using k-anonymity and l-diversity doesn’t guarantee the absence of privacy risk.

Limitations and considerations

K-anonymity and l-diversity allow us to communicate privacy risk in a dataset. However, as we saw throughout the article, these techniques have limitations:

- Homogeneity attacks: An attacker can still infer sensitive information if all sensitive values within a k-anonymous group are the same.

- Background knowledge attacks: Additional information might allow attackers to narrow down possibilities.

- Skewness attacks: Even with l-diversity, if one value is much more frequent, high-probability inferences are possible.

- Similarity attacks: If the sensitive values in a group are semantically similar, it may still allow harmful inferences.

- Data utility trade-off: As we increase privacy protections, we often lose some of the data’s usefulness or specificity.

In practice, it may be impossible to completely eliminate the risk of re-identification without rendering the data useless in the process. The best way to remove privacy risk is to avoid collecting or sharing the information in the first place.

Learn more

To learn more, I recommend the Data Privacy Handbook by Utrecht University. In addition to k-anonymity and l-diversity, it also covers t-closeness—another technique to further reduce privacy risk—along with videos on each technique.

ARX is an open source tool for anonymizing sensitive personal data. It supports a range of data anonymization techniques, including k-anonymity and l-diversity.

Also, if you’re using Google Cloud Platform, check out their Sensitive Data Protection which lets you compute k-anonymity and l-diversity on your datasets.

Conclusion

As data professionals, we need to balance privacy risks and data utility when sharing sensitive data. K-anonymity and l-diversity are two data anonymization techniques that can help you reason and make conscious decisions about the privacy risks for a dataset.

Unfortunately, it may be close to impossible to guarantee that an attacker won’t be able to re-identify individuals in a dataset. Data anonymization techniques should only be used as one part of a larger, comprehensive privacy program.

Edit 2024-11-11: Added ARX to the list of resources. Thanks to FjordWarden for the recommendation!

Comments

Leave a comment by replying to the post on Bluesky or Mastodon.